Maybe We Should Focus On Essential Complexity

Fred Brooks wrote his famous paper "No Silver Bullet - Essence and Accident in Software Engineering" 30 years ago. I think I first heard about the paper while listening to a talk by J.B. Rainsberger, in which he discussed the concepts of essential and accidental complexity.

Essential complexity is the complexity of the problem we are trying to solve: the part we can't get rid of. SpaceX and Hyperloop are good examples of cases with a lot of essential complexity.

Accidental complexity, on the other hand, is complexity that could be avoided. It's about us, the people developing the system, and our lack of skills (or discipline, or whatever the reason) to develop the system in a way that doesn't accumulate technical debt in the long term.

How essential is essential?

This is a good question, though. Is essential complexity really that essential in the end? Let's see what else Brooks wrote in his paper:

Hence we must consider those attacks that address the essence of the software problem, the formulation of these complex conceptual structures. Fortunately, some of these are very promising.

…Therefore one of the most promising of the current technological efforts, and one which attacks the essence, not the accidents, of the software problem, is the development of approaches and tools for rapid prototyping of systems as part of the iterative specification of requirements.

Even Brooks discussed attacking essential complexity in his paper. Considering that it's been 30 years since he wrote it, I don't know how far we've come.

If we put SpaceX and Hyperloop aside and consider our everyday essential complexity, that would be what the client or customer asks of us and what we end up building. Every feature you build increases the complexity of your overall solution. If you're really good at your work, you may be able to keep that complexity from growing too quickly.

But what proportion of all the features you've added to (or changed in) the product will have a verified impact on the behaviour of the people whose lives the product touches? Considering the increase in complexity (e.g. code, tests, documentation), we should aim to maximise the probability of having a real, desired impact on the lives of the people who use our product to solve their problems. Even more importantly, we should have some kind of hypothesis when we come up with an idea for a feature. What kind of impact will it have? How can we conclude that it was worth building?

We should widen our scope of focus

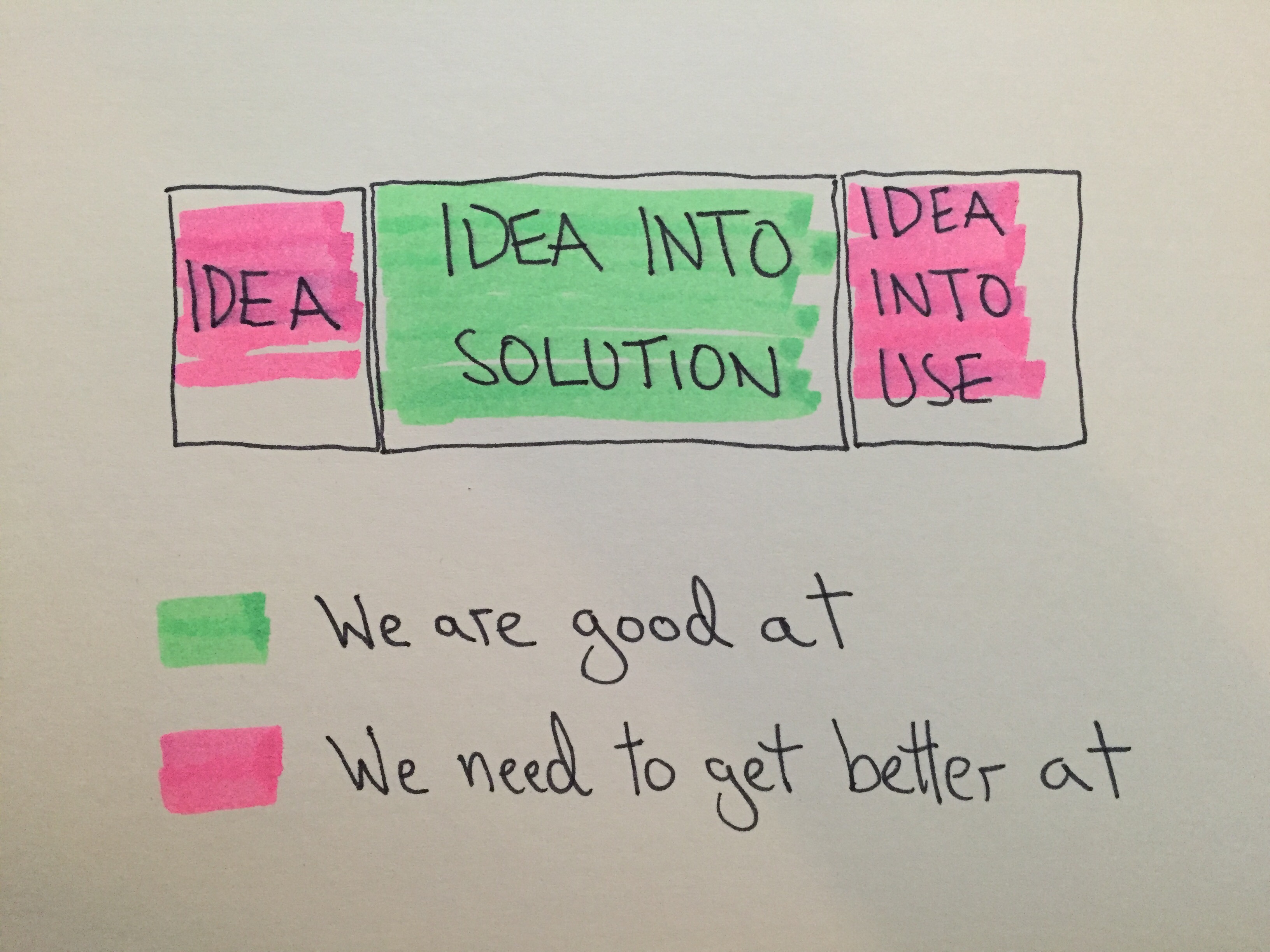

Based on my short experience in this industry, I've seen the focus mostly look something like this.

We are getting really good at turning those ideas into more reliable, closer-to-goal solutions, while cutting down lead time and tightening collaboration.

But I still think there's a lot of room for improvement, especially before we start building and after we deploy those ideas into use.

Before we start building

I asked people on Twitter whether they knew of approaches that help people explore ideas when trying to figure out what to build. With the help of my fellow tweeps, I was able to list several approaches that could potentially cut down essential complexity at the stage when people are just coming up with ideas on what to build.

Here's a list of these approaches (I would appreciate hearing if you have something to add):

7 Product Dimensions https://www.youtube.com/watch?v=x9oIpZaXTDs https://www.discovertodeliver.com/index.php https://www.ebgconsulting.com/Pubs/Articles/StrengthenYourDiscoveryMuscle(Gorman-Gottesdiener).pdf

Impact Mapping https://github.com/impactmapping/open-impact-mapping-workshop/tree/master/educational-workshops/concerts-online

Example Mapping https://lisacrispin.com/2016/06/02/experiment-example-mapping/

Validate Your Ideas (experiments) with the Test Card https://www.youtube.com/watch?v=cW46ySJmLD8

Story Mapping https://www.thoughtworks.com/insights/blog/story-mapping-visual-way-building-product-backlog

Lean Canvas https://leanstack.com/lean-canvas/

Elephant Carpaccio (Elephant Carpaccio facilitation guide)

Product Tree https://igoprod2.wpengine.com/prune-the-product-tree/ https://www.agilealliance.org/resources/videos/awesome-superproblems/

When we’ve deployed ideas into use

This is a more challenging one, and it depends on what kind of technology stack you are operating with. Nevertheless, if we don't investigate in any way how the features we deploy impact the lives of the people who use our product, how will we know that we've deployed something that was actually valuable?

Another aspect is our ability to come up with ideas that provide value for the people who use the product. If we don't validate those ideas, how do we know whether we're any good at coming up with ideas on what to implement? Awareness comes from visibility.

I recently had a lunch discussion about this topic, and we pondered what to do if a feature turned out not to be useful. This is where it depends on what kind of technology you are operating with. In the end, you would need the ability to remove features that were not valuable, and this could be a couple of months after they were deployed to production (if it takes time to validate the hypothesis).

What next?

The point I'm trying to make is: don't take essential complexity as a law of nature. It's terrain that isn't fully explored yet, whether that means exploring ideas before we start iterating on them or validating them afterwards in production.

This subject, and the pink areas in the picture, are something I'm planning to focus on especially at my current employer (not forgetting the middle part). Let's see where it takes us.